Danish Khan is a digital marketing strategist and founder of Traffixa who takes pride in sharing actionable insights on SEO, AI, and business growth.

In today’s complex digital landscape, marketing teams rely on a dizzying array of tools. From advertising platforms and CRMs to analytics suites and email automation systems, each tool generates valuable streams of data. However, this data often exists in isolated silos, making it nearly impossible to obtain a complete and trustworthy view of marketing performance. A marketing data warehouse solves this fundamental challenge by creating a centralized, reliable repository for all marketing data.

It acts as the foundational layer of a modern marketing data stack, enabling sophisticated analytics, comprehensive reporting, and data-driven activation that is not possible when data is fragmented. By bringing everything together, you can answer critical business questions like, “What is the true return on ad spend across all channels?” or “What sequence of touchpoints leads to our most valuable customers?”

A marketing data warehouse is a system designed specifically for analytics and reporting. It consolidates data from disparate sources, transforms it into a clean and consistent format, and stores it for accessible querying. The ultimate goal is to establish a “Single Source of Truth” (SSOT), ensuring that everyone in the organization—from the CMO to a channel manager—uses the same underlying data for decision-making. This eliminates the chronic problem of conflicting metrics, such as when Facebook Ads reports 100 conversions and Google Analytics reports 80 for the same campaign. Within a data warehouse, you define the logic for key metrics like “conversion” once, apply it consistently to all raw data, and produce a single, reliable figure that everyone trusts. This trust is the cornerstone of a data-driven culture.
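To make the "define it once" idea concrete, here is a minimal sketch. The event shape, the source names, and the rule that a conversion is a completed purchase counted once per order ID are all assumptions made for illustration, not a standard definition.

```python
from typing import Iterable

# Hypothetical raw events as they might land from two different tools.
RAW_EVENTS = [
    {"source": "facebook_ads", "event": "purchase", "order_id": "A1", "status": "completed"},
    {"source": "web_analytics", "event": "purchase", "order_id": "A1", "status": "completed"},
    {"source": "web_analytics", "event": "purchase", "order_id": "A2", "status": "refunded"},
    {"source": "facebook_ads", "event": "purchase", "order_id": "A3", "status": "completed"},
]


def is_conversion(event: dict) -> bool:
    """The single shared definition of a conversion (an assumption for this sketch)."""
    return event["event"] == "purchase" and event["status"] == "completed"


def count_conversions(events: Iterable[dict]) -> int:
    """Count unique converted orders, no matter which tool reported them."""
    return len({e["order_id"] for e in events if is_conversion(e)})


total_conversions = count_conversions(RAW_EVENTS)
print(total_conversions)
```

Because order `A1` is reported by both tools, deduplicating on `order_id` keeps it from being counted twice, and the refunded order `A2` is excluded by the shared rule.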

The traditional approach to marketing analytics involves manually exporting CSV files from various platforms and stitching them together in spreadsheets. This process is not only tedious and time-consuming but also highly prone to human error. It creates a fragile system where reports are perpetually out of date and insights are shallow. Every new question requires a new manual data-pulling exercise, stifling curiosity and proactive analysis.

A centralized system built around a marketing data warehouse represents a paradigm shift. Data flows automatically from sources into the warehouse via data pipelines, where it is cleaned, modeled, and prepared for analysis on a recurring schedule. This approach frees analytics talent from mundane data wrangling to focus on high-value activities like attribution modeling, customer segmentation, and performance forecasting. It transforms marketing analytics from a reactive, report-generating function into a proactive, strategic engine for growth.

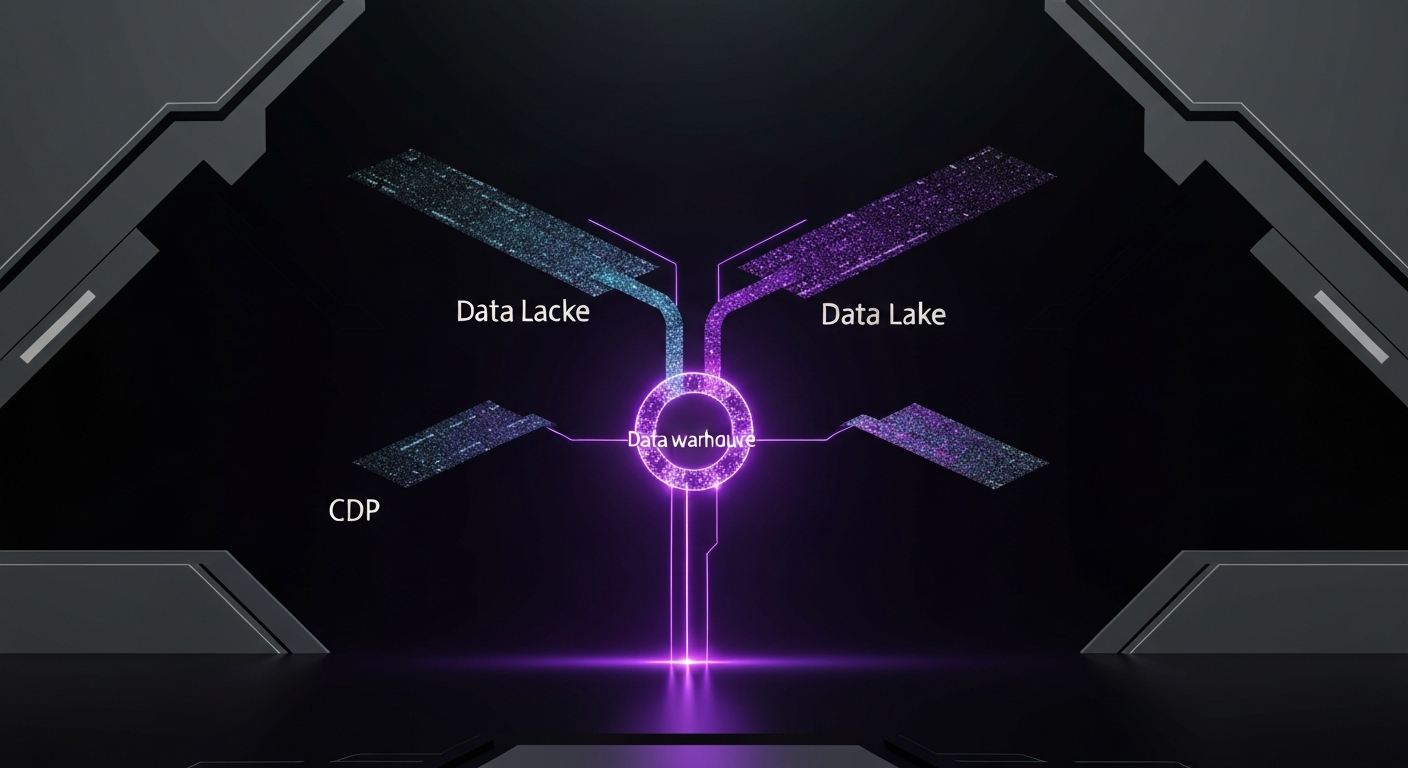

When planning a centralized data system, you will encounter several key terms: data warehouse, data lake, and Customer Data Platform (CDP). While they all manage data, their purpose, structure, and ideal use cases differ significantly. Understanding these differences is crucial for building an effective and efficient marketing data stack.

Each platform is designed to solve a different kind of data problem. A data warehouse prioritizes structured data for business intelligence, a data lake handles raw data at scale for data science, and a CDP focuses on unifying customer profiles for marketer-led activation.

| Feature | Data Warehouse | Data Lake | Customer Data Platform (CDP) |
|---|---|---|---|
| Primary Purpose | Business Intelligence (BI) and complex analytics on historical data. | Storage of vast amounts of raw, unstructured data for data science and machine learning. | Creating a unified customer profile for real-time segmentation and marketing activation. |
| Data Structure | Schema-on-write: Data is structured and modeled before being stored. | Schema-on-read: Data is stored in its raw, native format. Structure is applied when it’s read. | Primarily focused on a specific customer-centric schema. |
| Primary Users | Data analysts, business analysts, and data scientists. | Data scientists, data engineers, and machine learning engineers. | Marketers, product managers, and customer support teams. |
| Data Types | Structured and semi-structured data (e.g., tables, JSON). | Any type of data: structured, semi-structured, and unstructured (e.g., logs, images, videos). | Customer-related data: events, attributes, and transactional data. |
| Speed & Focus | Optimized for complex queries and deep analysis. Batch-oriented. | Optimized for low-cost storage and processing flexibility. | Optimized for real-time identity resolution and audience syncing to other tools. |

These platforms are not mutually exclusive; they can work together in a cohesive stack.

Whether a data warehouse can replace a CDP is a frequently debated topic in the modern data stack. Traditionally, the answer was no, as CDPs offered marketer-friendly interfaces and real-time identity resolution that warehouses could not match. However, with the rise of powerful cloud data warehouses and a new category of tools called “Reverse ETL,” the distinction has become less clear. The concept of the “Composable CDP” has emerged, where the data warehouse serves as the central customer record. Data is ingested and modeled in the warehouse, and Reverse ETL tools then sync audiences and attributes from the warehouse to operational tools. This warehouse-native approach can offer greater flexibility, avoid data duplication, and provide a more powerful foundation than a traditional, packaged CDP. While it requires more technical expertise to set up, it gives data teams full control and leverages their existing investment in the warehouse.

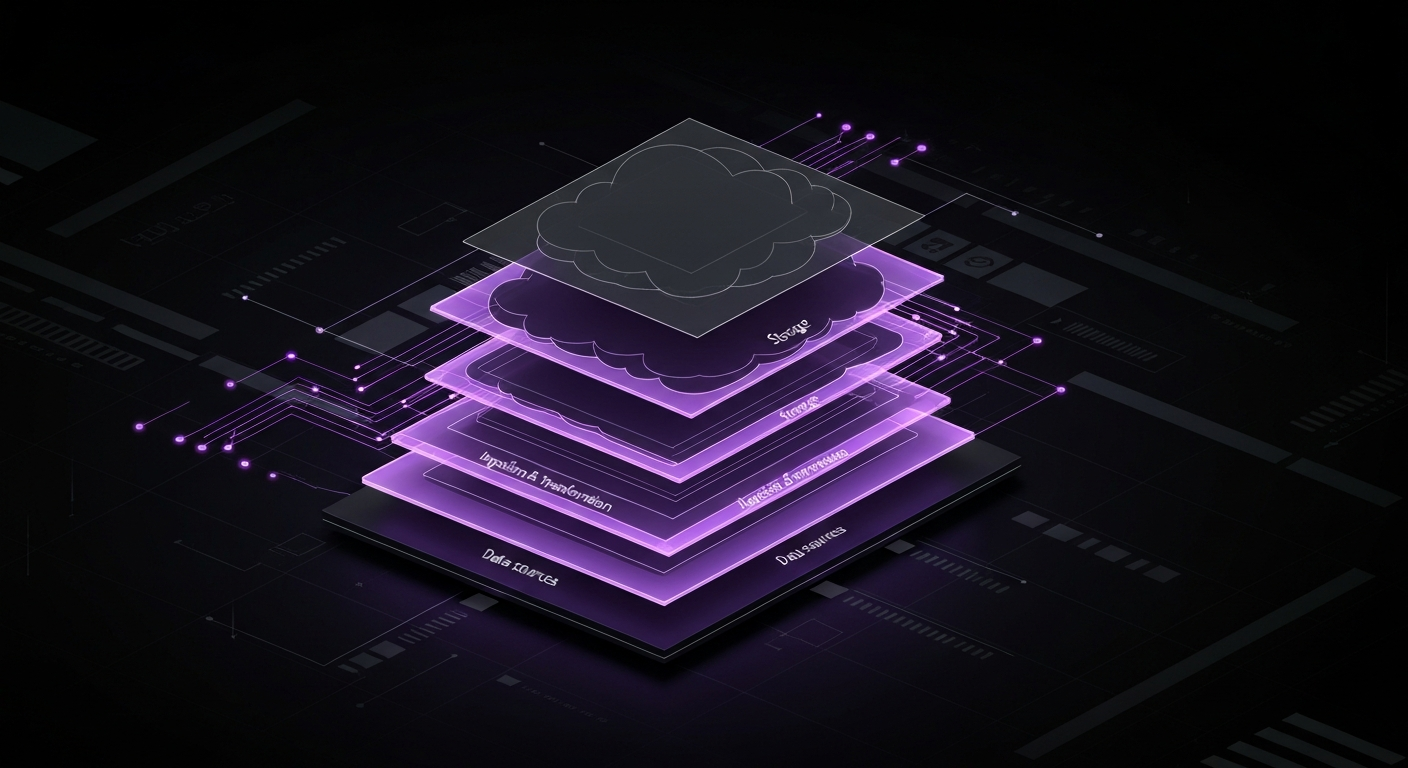

A modern marketing data warehouse is not a single product but an interconnected system of technologies, each playing a specific role. Understanding this architecture is key to building a robust and scalable solution. We can break it down into four distinct layers.

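This top layer, the data sources, represents the universe of tools and platforms where your marketing data is generated. The goal of the data warehouse is to centralize the information from this fragmented layer. Sources typically include web and product analytics tools, CRMs, advertising platforms, email and marketing automation systems, and your own production database.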

This middle layer is responsible for moving data from the source layer to the storage layer, acting as the plumbing of your data architecture. The dominant paradigm today is ELT (Extract, Load, Transform), a shift from the older ETL (Extract, Transform, Load) model. In an ELT workflow, raw data is first extracted from the source and loaded directly into the data warehouse. The transformation—cleaning, joining, and modeling the data for analysis—happens inside the warehouse itself, leveraging its powerful processing engine. This approach is more flexible and scalable for handling the large and varied datasets common in marketing.
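The ELT order of operations can be sketched with Python's built-in `sqlite3` module standing in for a cloud warehouse. The table names (`raw_ad_spend`, `stg_ad_spend`) and the sample rows are hypothetical; the point is only that the raw data is loaded untouched and the cleanup happens afterwards, in SQL, inside the warehouse.

```python
import sqlite3

# Hypothetical rows "extracted" from an ad platform, left exactly as received.
extracted_rows = [
    ("2024-04-01", " Spring_Sale ", "12.50"),
    ("2024-04-01", "spring_sale", "7.50"),
    ("2024-04-02", "Brand", "5.00"),
]

warehouse = sqlite3.connect(":memory:")  # stand-in for a cloud data warehouse

# Load: store the raw data as-is (all text, no cleaning yet).
warehouse.execute("CREATE TABLE raw_ad_spend (day TEXT, campaign TEXT, cost TEXT)")
warehouse.executemany("INSERT INTO raw_ad_spend VALUES (?, ?, ?)", extracted_rows)

# Transform: clean names, cast types, and aggregate using the warehouse's SQL engine.
warehouse.execute("""
    CREATE TABLE stg_ad_spend AS
    SELECT day,
           LOWER(TRIM(campaign)) AS campaign,
           SUM(CAST(cost AS REAL)) AS cost
    FROM raw_ad_spend
    GROUP BY day, LOWER(TRIM(campaign))
""")

transformed = warehouse.execute(
    "SELECT day, campaign, cost FROM stg_ad_spend ORDER BY day, campaign"
).fetchall()
print(transformed)
```

Keeping `raw_ad_spend` intact means the transformation can be rewritten and rerun later without re-extracting anything from the source, which is a large part of ELT's flexibility.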

This is the core of the architecture—the central repository where all your structured and semi-structured data lives. Modern cloud data warehouses are the standard choice here. They are designed for analytical queries and can scale compute and storage resources independently and automatically. They provide a SQL interface for querying data, making it accessible to a wide range of analysts and tools. The leading platforms in this space are Snowflake, Google BigQuery, and Amazon Redshift.

This is the final layer where data is turned into value. It is how users interact with the information stored in the warehouse.

Before writing any code or selecting any tools, the most critical step is to define what you want to achieve. A data warehouse project that begins with technology instead of strategy is unlikely to succeed. The goal is not merely to collect data, but to answer important questions and drive better business outcomes. Begin by collaborating with marketing leadership and key stakeholders to identify the most pressing challenges and unanswered questions.

Ask questions like “What is our true customer lifetime value?” and “Which marketing channel has the highest ROI?”

From this discussion, you can distill clear business objectives, such as measuring the true return on ad spend across all channels or building a multi-touch attribution model.

Once you have your objectives, translate them into specific Key Performance Indicators (KPIs) that the data warehouse must be able to calculate. This process provides clear technical requirements. For example, building a multi-touch attribution model requires tracking every marketing touchpoint for each user over time. This immediately informs you which data sources are necessary and how the data needs to be structured.

With your objectives defined, you can now conduct an audit of your marketing technology stack to identify the specific data sources needed to answer your questions. The goal is to create a priority list. You do not need to integrate everything at once. Start with the sources that will provide the most value for your initial objectives and expand from there. Here are the most common and critical categories.

Your web and product analytics platform is the source of truth for on-site and in-app user behavior. This data tells you how users interact with your digital properties. Key data points include sessions, pageviews, events (like button clicks or form submissions), user attributes (like device type and location), and traffic source information.

Your CRM contains invaluable information about your leads, contacts, and customers. This is where you find data on lead status, sales pipeline stages, deal sizes, account ownership, and all interactions logged by your sales team. Integrating CRM data is essential for connecting marketing efforts to sales outcomes and revenue.

To understand marketing efficiency, you must centralize your spend and performance data from all paid channels. This includes metrics like impressions, clicks, cost, and conversions at the campaign, ad set, and ad level. Bringing this data into the warehouse allows you to compare performance across platforms on an apples-to-apples basis.
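An apples-to-apples comparison usually starts by mapping each platform's export onto one shared schema. The sketch below assumes two made-up export formats (`platform_a` reporting cost in millionths of a currency unit, `platform_b` reporting spend as a decimal string); real field names and units vary by platform and should be checked against each API's documentation.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class AdPerformance:
    """One row in a shared, platform-neutral schema (hypothetical)."""
    platform: str
    campaign: str
    clicks: int
    cost: float  # in account currency units
    conversions: int


def from_platform_a(row: dict) -> AdPerformance:
    # Assumption: this export reports cost in millionths of a currency unit.
    return AdPerformance(
        platform="platform_a",
        campaign=row["campaign_name"],
        clicks=row["clicks"],
        cost=row["cost_micros"] / 1_000_000,
        conversions=row["conversions"],
    )


def from_platform_b(row: dict) -> AdPerformance:
    # Assumption: this export reports spend as a decimal string.
    return AdPerformance(
        platform="platform_b",
        campaign=row["campaign"],
        clicks=row["link_clicks"],
        cost=float(row["spend"]),
        conversions=row["results"],
    )


def cost_per_conversion(rows: List[AdPerformance]) -> Dict[str, float]:
    """Total cost divided by total conversions, per platform."""
    cost: Dict[str, float] = {}
    conversions: Dict[str, int] = {}
    for r in rows:
        cost[r.platform] = cost.get(r.platform, 0.0) + r.cost
        conversions[r.platform] = conversions.get(r.platform, 0) + r.conversions
    # Platforms with zero conversions are skipped to avoid dividing by zero.
    return {p: cost[p] / conversions[p] for p in cost if conversions[p] > 0}


unified = [
    from_platform_a({"campaign_name": "spring_sale", "clicks": 200,
                     "cost_micros": 50_000_000, "conversions": 10}),
    from_platform_b({"campaign": "spring_sale", "link_clicks": 300,
                     "spend": "80.00", "results": 20}),
]
cpa_by_platform = cost_per_conversion(unified)
print(cpa_by_platform)
```

Once every source is expressed as `AdPerformance` rows, any metric only has to be written once, rather than once per platform.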

Data from tools like Marketo, Braze, or Mailchimp provides insight into your owned marketing channels. Integrating this data allows you to track email sends, opens, clicks, and unsubscribes, and incorporate these touchpoints into your customer journey analysis.

This is often the most valuable and highest-quality data source. Your production database contains the ground truth about your business: user signups, product usage, subscription events, orders, and revenue. Joining this transactional data with marketing data from other sources is what unlocks the most powerful insights, such as calculating the true LTV of customers acquired from different marketing campaigns.
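As a rough illustration of that join, the sketch below combines a hypothetical user-to-campaign mapping (from marketing data) with hypothetical orders (from the production database). It computes average revenue to date per acquired customer, which is only a simple proxy for LTV; a real LTV model would also account for time horizon, margins, and churn.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# Hypothetical marketing data: which campaign acquired each user.
acquisition: Dict[str, str] = {"u1": "spring_sale", "u2": "spring_sale", "u3": "brand"}

# Hypothetical production data: (user_id, order amount).
orders: List[Tuple[str, float]] = [("u1", 40.0), ("u1", 60.0), ("u2", 20.0), ("u3", 90.0)]


def revenue_per_customer(
    acquisition: Dict[str, str], orders: List[Tuple[str, float]]
) -> Dict[str, float]:
    """Average revenue to date per acquired customer, grouped by campaign."""
    revenue = defaultdict(float)
    for user_id, amount in orders:
        campaign = acquisition.get(user_id)
        if campaign is not None:  # ignore orders from users with no known campaign
            revenue[campaign] += amount

    customers = defaultdict(int)
    for campaign in acquisition.values():
        customers[campaign] += 1

    return {c: revenue[c] / n for c, n in customers.items()}


ltv_proxy = revenue_per_customer(acquisition, orders)
print(ltv_proxy)
```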

The storage layer is the foundation of your marketing data stack, and choosing the right cloud data warehouse platform is a major decision. The market is dominated by three main players: Snowflake, Google BigQuery, and Amazon Redshift. While they all serve the same core purpose, they have different architectures, pricing models, and ecosystem strengths.

Your choice will depend on your existing cloud infrastructure, budget, and technical team’s expertise. Here is a high-level comparison to guide your decision:

| Feature | Snowflake | Google BigQuery | Amazon Redshift |
|---|---|---|---|
| Architecture | Decoupled storage and compute. Allows independent scaling of resources. Multi-cluster architecture. | Serverless architecture. Google manages all resources behind the scenes. Fully decoupled. | Node-based architecture. Compute and storage are coupled, though recent versions offer more decoupling. |
| Pricing Model | Pay-per-second for compute (virtual warehouses) and per-TB for storage. Very granular. | Pay-per-query (bytes processed) or flat-rate slots. Storage is separate and inexpensive. | Pay per hour for nodes (compute/storage clusters). Offers reserved instances for discounts. |
| Ease of Use | Considered very easy to set up and manage. SQL-based. Excellent UI and near-zero maintenance. | Extremely easy to get started due to its serverless nature. No infrastructure to manage. | Can be more complex to manage, requiring tuning of clusters and distribution keys for optimal performance. |
| Ecosystem Integration | Strong, platform-agnostic ecosystem. Connects easily to tools across all major clouds (AWS, GCP, Azure). | Deeply integrated with the Google Cloud Platform (GCP) ecosystem (e.g., Looker, AI Platform). | Deeply integrated with the Amazon Web Services (AWS) ecosystem (e.g., S3, Glue, QuickSight). |
| Key Strengths | Flexibility, ease of use, and multi-cloud support. Excellent for data sharing. | Simplicity, serverless power, and strong integration with Google’s data and ML tools. | Performance for large-scale BI, cost-effectiveness for predictable workloads, and deep AWS integration. |

When making your selection, consider the following:

Once you have selected your data warehouse, the next step is to build the pipelines that will populate it with data. This is the domain of ELT (Extract, Load, Transform). This process involves pulling data from your sources, loading it into the warehouse, and then transforming it into clean, analysis-ready models.

Building and maintaining data pipelines from scratch is a complex engineering task. Each source API, like those for Google Ads or Salesforce, has unique authentication methods, data formats, and rate limits. This is where off-the-shelf ELT tools provide immense value. Platforms like Fivetran, Stitch, or Airbyte offer hundreds of pre-built connectors that automate the “Extract” and “Load” parts of the process. You simply provide your credentials, and the tool manages the entire process of pulling data and loading it into your warehouse, including handling API changes and schema updates. Using an ELT tool can save significant engineering time, allowing your team to focus on the more valuable transformation and analysis steps.

After your raw data has been loaded into the warehouse, it needs to be transformed. The raw data is often messy, with inconsistent naming conventions and different structures. The “Transform” step is where you clean, join, and aggregate this data into a logical structure for analytics. This is typically done using SQL.

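The modern standard for managing these SQL transformations is a tool called dbt (data build tool). dbt allows you to apply software engineering best practices to your analytics code. With dbt, you can keep your SQL models in version control, build modular models that reference one another, test your data, and document your datasets.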

dbt has become an essential part of the modern data stack, transforming analytics from a series of ad-hoc scripts into a reliable and maintainable engineering discipline.

Your data pipelines need to run automatically on a regular schedule to keep your warehouse up-to-date. Most ELT tools have built-in schedulers that allow you to set your data syncs to run hourly or daily. For the transformation step, dbt can be run on a schedule using tools like dbt Cloud or workflow orchestrators like Airflow. Automating this entire process ensures that your stakeholders always have access to fresh, reliable data without any manual intervention.

Data modeling is the process of designing how your data is organized and related within your warehouse. A well-designed data model is the difference between a data warehouse that is fast, intuitive, and easy to query, and one that is slow, confusing, and unusable. For marketing analytics, the most common and effective approach is dimensional modeling.

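Dimensional modeling organizes data into “fact” tables and “dimension” tables. Fact tables record measurable events, such as orders or ad clicks, while dimension tables hold the descriptive context for those events, such as campaigns, customers, or dates. This structure is often called a star schema.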

A star schema consists of a central fact table connected to multiple dimension tables, resembling a star. A snowflake schema is a more normalized variation where dimensions are broken down into further sub-dimensions. For most marketing use cases, a star schema provides the best balance of performance and ease of use.

When building your marketing data model, you will create several core fact and dimension tables, such as `fct_orders`, `dim_campaigns`, and `dim_dates`.

By joining these tables, you can easily answer complex questions. For instance, to find the total revenue from campaigns run on Facebook in Q2, you would join `fct_orders` with `dim_campaigns` and `dim_dates`.
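The Facebook Q2 revenue question can be sketched as a star-schema query, again using `sqlite3` as a stand-in warehouse. The table names come from the text above, but every column name (`campaign_id`, `platform`, `date_id`, `quarter`, `revenue`) and all sample rows are assumptions for illustration.

```python
import sqlite3

db = sqlite3.connect(":memory:")
db.executescript("""
    CREATE TABLE dim_campaigns (campaign_id INTEGER PRIMARY KEY, campaign_name TEXT, platform TEXT);
    CREATE TABLE dim_dates (date_id INTEGER PRIMARY KEY, full_date TEXT, year INTEGER, quarter INTEGER);
    CREATE TABLE fct_orders (order_id INTEGER PRIMARY KEY, campaign_id INTEGER, date_id INTEGER, revenue REAL);

    INSERT INTO dim_campaigns VALUES (1, 'spring_sale', 'Facebook'), (2, 'brand', 'Google');
    INSERT INTO dim_dates VALUES (20240215, '2024-02-15', 2024, 1), (20240510, '2024-05-10', 2024, 2);
    INSERT INTO fct_orders VALUES
        (1, 1, 20240510, 100.0),  -- Facebook, Q2
        (2, 1, 20240215, 50.0),   -- Facebook, Q1
        (3, 2, 20240510, 70.0),   -- Google, Q2
        (4, 1, 20240510, 25.0);   -- Facebook, Q2
""")

# The fact table sits in the middle; each dimension is one join away.
facebook_q2_revenue = db.execute("""
    SELECT SUM(o.revenue)
    FROM fct_orders AS o
    JOIN dim_campaigns AS c ON c.campaign_id = o.campaign_id
    JOIN dim_dates AS d ON d.date_id = o.date_id
    WHERE c.platform = 'Facebook' AND d.year = 2024 AND d.quarter = 2
""").fetchone()[0]
print(facebook_q2_revenue)
```

Storing `quarter` on the date dimension keeps calendar logic out of individual queries, which is one reason a dedicated date table is so common in dimensional models.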

The ultimate goal for many marketing data warehouses is to understand the full customer journey. This requires creating a unified event stream model. This involves taking all touchpoint data—ad clicks, email opens, web visits, form fills—from various sources and stitching them together into a single, chronological table with a common `user_id` and a `timestamp`. This `fct_user_touchpoints` table becomes the foundation for building any type of multi-touch attribution model (e.g., linear, time-decay, or U-shaped) and for analyzing the paths users take on their journey to conversion.
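The three attribution models mentioned above differ only in how they split credit across one user's ordered touchpoints. The sketch below uses hypothetical touchpoints for a single user; the 40/20/40 split for the U-shaped model and the 7-day half-life for time decay are common conventions chosen here as assumptions, not fixed rules.

```python
from datetime import datetime
from typing import List

# Hypothetical rows from fct_user_touchpoints for one user, in chronological order.
touchpoints = [
    ("paid_social", datetime(2024, 5, 1)),
    ("email", datetime(2024, 5, 8)),
    ("organic_search", datetime(2024, 5, 15)),
]
conversion_time = datetime(2024, 5, 15)


def linear_weights(n: int) -> List[float]:
    """Every touchpoint gets an equal share of the credit."""
    return [1.0 / n] * n


def u_shaped_weights(n: int, edge_share: float = 0.4) -> List[float]:
    """First and last touch each get edge_share; the middle splits the rest."""
    if n == 1:
        return [1.0]
    if n == 2:
        return [0.5, 0.5]
    middle = (1.0 - 2 * edge_share) / (n - 2)
    return [edge_share] + [middle] * (n - 2) + [edge_share]


def time_decay_weights(
    times: List[datetime], conversion_time: datetime, half_life_days: float = 7.0
) -> List[float]:
    """Credit halves for every half_life_days between a touch and the conversion."""
    raw = [
        0.5 ** ((conversion_time - t).total_seconds() / 86400 / half_life_days)
        for t in times
    ]
    total = sum(raw)
    return [w / total for w in raw]


times = [t for _, t in touchpoints]
linear = linear_weights(len(touchpoints))
u_shaped = u_shaped_weights(len(touchpoints))
time_decay = time_decay_weights(times, conversion_time)

for (channel, _), lw, uw, tw in zip(touchpoints, linear, u_shaped, time_decay):
    print(f"{channel}: linear={lw:.3f} u_shaped={uw:.3f} time_decay={tw:.3f}")
```

Each function returns weights that sum to 1, so multiplying them by an order's revenue distributes that revenue across channels without double counting.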

With a well-modeled data warehouse in place, the final step is to make the data accessible and actionable. This is accomplished by connecting tools for visualization (Business Intelligence) and operationalization (Reverse ETL). This layer is where the return on your data warehouse investment is realized.

Business Intelligence (BI) platforms are the primary interface for most users to interact with the data warehouse. Tools like Tableau, Looker, Power BI, or Metabase connect directly to your warehouse and provide a user-friendly environment for data exploration and visualization. They allow analysts and marketing stakeholders to:

A good BI tool democratizes data access, empowering the marketing team to self-serve their own data needs and fostering a culture of data-informed decision-making.

While BI tools are for pulling insights *out* of the warehouse for humans to analyze, Reverse ETL tools are for pushing enriched data *back* into the operational tools that marketers use every day. This is the concept of “operational analytics,” and it is what makes your data truly actionable.

For example, within your data warehouse, you can use all your centralized data to build powerful customer models, such as lead scores, churn-risk segments, and predicted lifetime value (LTV).

With a Reverse ETL tool like Census or Hightouch, you can then sync these scores and segments from your warehouse directly into your other tools. You could send the lead score to Salesforce for sales prioritization, sync the churn risk segment to your marketing automation platform for a re-engagement campaign, and push the LTV value to Google Ads to create more effective lookalike audiences. This closes the data loop and allows you to directly leverage your deepest insights to power smarter, more personalized marketing.
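Conceptually, a Reverse ETL sync compares what the warehouse says with what the destination currently holds, then sends only the differences. The sketch below is a simplified, hypothetical illustration of that diffing step; it is not the API of Census, Hightouch, or any CRM, and the record keys and fields are made up.

```python
from typing import Dict, List, Tuple

Record = Dict[str, object]


def plan_sync(
    warehouse: Dict[str, Record], destination: Dict[str, Record]
) -> Tuple[Dict[str, Record], List[str]]:
    """Return (records to upsert, keys to delete) so the destination matches the warehouse."""
    upserts = {
        key: fields
        for key, fields in warehouse.items()
        if destination.get(key) != fields  # new or changed records only
    }
    deletes = sorted(key for key in destination if key not in warehouse)
    return upserts, deletes


# Hypothetical model output in the warehouse.
warehouse_scores = {
    "lead-1": {"lead_score": 87, "churn_risk": "low"},
    "lead-2": {"lead_score": 42, "churn_risk": "high"},
    "lead-3": {"lead_score": 65, "churn_risk": "medium"},
}

# Hypothetical current state of the destination tool.
crm_state = {
    "lead-1": {"lead_score": 87, "churn_risk": "low"},     # already in sync
    "lead-2": {"lead_score": 40, "churn_risk": "medium"},  # stale values
    "lead-9": {"lead_score": 10, "churn_risk": "low"},     # no longer in the model
}

upserts, deletes = plan_sync(warehouse_scores, crm_state)
print(sorted(upserts), deletes)
```

Sending only changed records matters in practice because destination APIs typically enforce rate limits.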

Building a marketing data warehouse is a transformative project, but it is not without its challenges. Being aware of potential hurdles from the outset can help you plan effectively and ensure the long-term success of your initiative.

The most common pitfall is the “garbage in, garbage out” syndrome. If the data entering your warehouse is inaccurate or inconsistent, the insights derived from it will be worthless. Trust in the data will erode, and the project will fail.
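One practical defense is to run automated checks on every load and fail loudly when they break. The sketch below implements two basic checks in plain Python, similar in spirit to dbt's built-in `unique` and `not_null` tests; the function names and the sample rows are hypothetical.

```python
from collections import Counter
from typing import Dict, List


def not_null_failures(rows: List[Dict[str, object]], column: str) -> List[int]:
    """Return the positions of rows where the column is missing or None."""
    return [i for i, row in enumerate(rows) if row.get(column) is None]


def unique_failures(rows: List[Dict[str, object]], column: str) -> List[object]:
    """Return the values that appear more than once in the column."""
    counts = Counter(row.get(column) for row in rows if row.get(column) is not None)
    return sorted(value for value, n in counts.items() if n > 1)


# Hypothetical orders with two deliberate problems.
orders = [
    {"order_id": 1, "user_id": "u1", "revenue": 40.0},
    {"order_id": 2, "user_id": None, "revenue": 20.0},  # missing user_id
    {"order_id": 2, "user_id": "u3", "revenue": 90.0},  # duplicate order_id
]

report = {
    "order_id_unique": unique_failures(orders, "order_id"),
    "user_id_not_null": not_null_failures(orders, "user_id"),
}
print(report)
```

An empty list for every check means the load passed; anything else is a reason to stop the pipeline before the bad rows reach a dashboard.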

Cloud data warehouses operate on a pay-as-you-go model, which offers great flexibility but can lead to spiraling costs if not managed carefully. Inefficient queries, unnecessary data processing, and oversized compute resources can quickly inflate your bill.

Often, the biggest challenges are not technical but organizational. Data teams and marketing teams speak different languages and have different priorities. The data team might be focused on technical purity and scalability, while the marketing team needs quick, actionable insights to hit its targets.

A marketing data warehouse is not just a tool for historical reporting; it is a strategic foundation for the future of data-driven marketing. As you mature your data infrastructure, you unlock capabilities that were previously out of reach, paving the way for more sophisticated and automated marketing strategies.

The centralized and clean data in your warehouse is the ideal fuel for Artificial Intelligence (AI) and Machine Learning (ML) models. Instead of being a separate data science activity, ML can be integrated directly into your data workflow. You can build predictive models within modern cloud data warehouses to forecast customer lifetime value, predict churn probability, or identify users most likely to convert. The outputs of these models can then be operationalized via Reverse ETL to drive proactive marketing campaigns.

Furthermore, the industry is moving toward real-time data processing. While daily or hourly batch updates are sufficient for most strategic analysis, the demand for immediate insights is growing. Technologies like Snowpipe Streaming and integrations with event-streaming platforms like Kafka are making it easier to ingest data into the warehouse in near real-time. This enables use cases such as real-time dashboard updates, immediate fraud detection, and instant personalization based on a user’s current actions. The marketing data warehouse is evolving from a static repository of historical data into a dynamic, intelligent hub that powers the next generation of marketing.

A marketing data warehouse is a central repository for structured and semi-structured data from various sources, designed for complex querying and analysis. A CDP is typically more focused on creating unified customer profiles for real-time activation by marketers. While they have overlapping functions, a data warehouse offers more flexibility for deep, custom analytics.

Costs vary significantly based on data volume, platform choice, and tooling. Key expenses include the cloud warehouse platform (often pay-per-query/storage), data ingestion tools (monthly subscription), transformation tools, and personnel costs for data engineers or analysts.

While modern tools have made the process more accessible, having technical expertise is crucial. A data engineer or a technical analyst with strong SQL skills is typically required for data modeling, pipeline management, and ensuring data integrity. Simpler setups may be manageable for a tech-savvy marketer.

ELT stands for Extract, Load, Transform. Unlike the traditional ETL, data is loaded into the warehouse *before* being transformed. This approach leverages the powerful processing capabilities of modern cloud data warehouses, offering more flexibility and speed for handling large, diverse marketing datasets.

Start by clearly defining your business goals. Identify the key questions you need to answer (e.g., ‘What is our true customer lifetime value?’, ‘Which marketing channel has the highest ROI?’). This will guide which data sources to integrate and how to model your data for effective analysis.

Yes, a data warehouse can be used for marketing attribution. In fact, it is an ideal environment for advanced attribution modeling. By centralizing touchpoints from all channels (ads, email, web visits, CRM), you can build custom multi-touch attribution models that go far beyond the capabilities of individual platform analytics.

About the author:

Digital Marketing Strategist

Danish is the founder of Traffixa and a digital marketing expert who takes pride in sharing practical, real-world insights on SEO, AI, and business growth. He focuses on simplifying complex strategies into actionable knowledge that helps businesses scale effectively in today’s competitive digital landscape.
